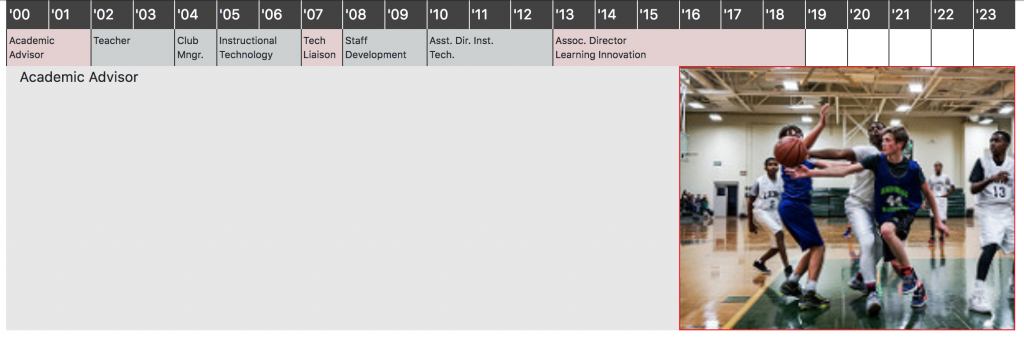

Jujutsu¹ is a martial art focused on using your opponent's momentum against them: cleverly redirecting force rather than trying to meet it head-on. The same approach might help with some of today's social media woes, where people want to keep taking advantage of the good aspects of these tools/communities […]

Social Media Jujutsu