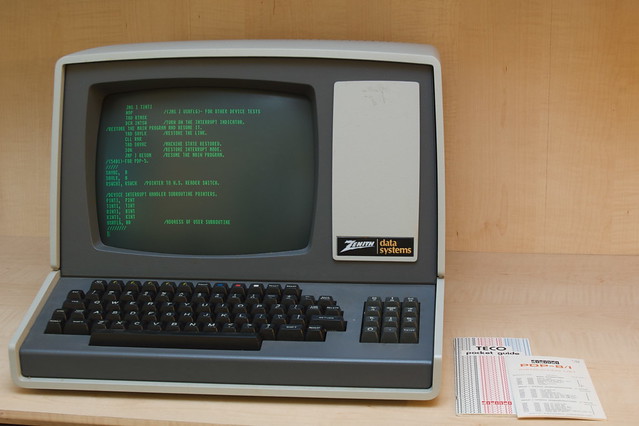

Flickr photo shared by ajmexico under a Creative Commons (BY) license

I’m trying to step up my programming game a bit. APIs are also getting more and more accessible to jokers like myself. (In this case I also use PHP, cron, and some regex.) All of this should make Alan very proud.

But I’m relatively terrible at doing things without a purpose. Luckily one wandered in on Tuesday. A faculty member who I’ve worked with a few times before came in and asked if there was any way to grab Instagram data for a project on social media and health that focused on vaping and ecigs. I’m not one to look a gift project in the mouth so I said I’d take a stab at it.

Step one was to check out Instagram’s API; in particular I wanted to see the tag endpoints. Those are URLs that return JSON data. To get at these you need to register as an Instagram developer and register a client. This is a pretty straightforward process.
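
To make “JSON data” a bit more concrete, here is a minimal sketch of decoding a tag-endpoint style response in PHP. The sample payload is invented for illustration and trimmed down to the fields used later in this post; treat the exact shape as an assumption, since a real response carries many more keys.

```php
<?php
// Invented sample payload, trimmed to the fields this post uses.
// A real response from the tag endpoint contains many more keys.
$json = '{
  "data": [
    {
      "user": {"username": "example_user"},
      "likes": {"count": 12},
      "comments": {"count": 3},
      "link": "https://example.com/p/abc/",
      "caption": {"text": "cloud chasing #vapes"},
      "filter": "Normal"
    }
  ]
}';

// json_decode() returns nested stdClass objects by default,
// which is why the fields are read with -> further down.
$result = json_decode($json);

foreach ($result->data as $media) {
    echo $media->user->username . ' has ' . $media->likes->count . " likes\n";
}
```

The same `->` chains show up in the table and CSV code later on, so it helps to see where they come from.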

After that I browsed around GitHub to see what might already exist. This got me to the Instagram PHP API. I always start by wandering GitHub much like I start my WordPress work by looking at plugins first. It took me a long time to do the latter regularly but I’ve been better at applying it to GitHub (and Stack Overflow).

As part of my learning, I decided I’d also try installing Scotch Box like Mark suggested a while back. That gives me a virtual machine on my laptop that I can maul without fear of destroying anything important. If I wreck it, I can just spin up a new one. I did it. It works and is pretty neat. I did try a number of things that I would not have risked on my own computer or a live server (which were my normal methods prior to having this option). I also got hopelessly lost at one point after ssh-ing into the virtual box and messing around quite a bit.

So for me, I love kind people like cosenary who have functional examples. It lets me isolate variables. I was able to put my Instagram keys in and get a working demo. That means authentication works, so any subsequent issues are my fault. It’s always good to know who’s to blame.

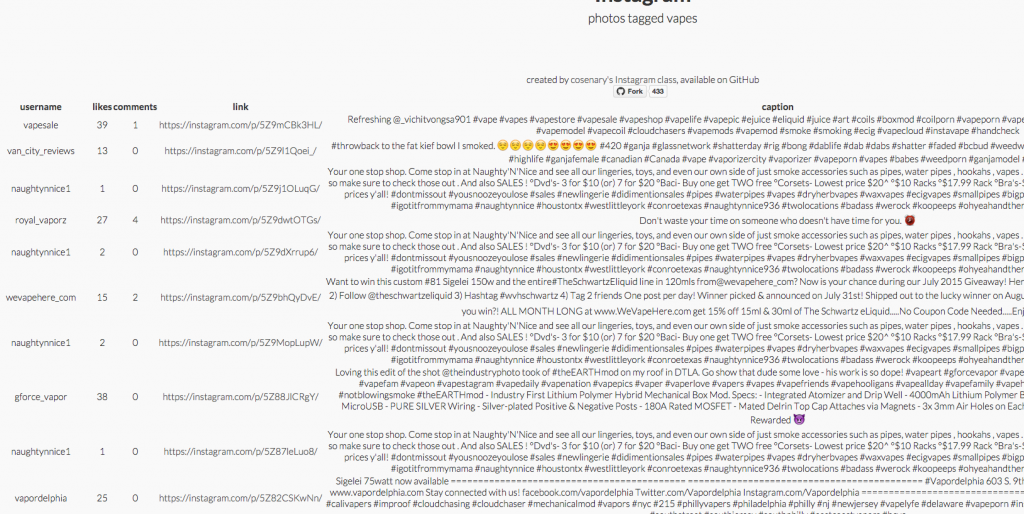

From there it was a matter of shifting things to do what I needed, which was essentially to return a table of data about vape-related Instagrams. Instead of `$result = $instagram->getPopularMedia();` (which returns popular things of all kinds), I wanted `$result = $instagram->getTagMedia('vapes', '40');` which returns things tagged “vapes” and, sadly, only 20 results before requiring pagination (even though I asked for 40; it will, however, give me fewer than 20 if I ask).

Get the Data

That works but it returns the same grid layout of pretty pictures that the original example does. I like it but I don’t need it. I just want the data. You can see what I ended up doing below. It builds a table, one row per post. There’s not much commenting but I think it is fairly straightforward.

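    <?php
    foreach ($result->data as $media) {
        // get the data, escaping text fields so odd characters can't break the HTML
        $username = htmlspecialchars($media->user->username);
        $likes    = (int) $media->likes->count;
        $comments = (int) $media->comments->count;
        $link     = htmlspecialchars($media->link);
        // the caption may be missing on posts without one
        $caption  = htmlspecialchars(isset($media->caption->text) ? $media->caption->text : '');
        $filter   = htmlspecialchars($media->filter);

        // build the table row (the opening <tr> now lives in the same string,
        // so it is no longer overwritten before output)
        $content = "<tr>
            <td>{$username}</td>
            <td>{$likes}</td>
            <td>{$comments}</td>
            <td><a href=\"{$link}\">{$link}</a></td>
            <td>{$caption}</td>
            <td>{$filter}</td>
        </tr>";

        // output the row
        echo $content;
    }
    ?>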

Save the Data

So for the moment, I’m getting what I want (although not as much of it). I am not quite ready to parse out the stuff for pagination (there’s a do-while loop involved that I don’t get yet). Anyway, my temporary workaround is to archive what I get: a cron task pulls down the 20 posts every hour and saves them to a CSV file.
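
For what it’s worth, the do-while idea behind pagination can be sketched without touching the API at all. Everything here is hypothetical: `collectPages` is a made-up helper, and `$fakeApi` is a stand-in that serves 50 fake posts 20 at a time. In real use the callable would wrap whatever the wrapper or the API offers for fetching the next page, which I’m leaving as an assumption.

```php
<?php
/**
 * Collect items across pages with a do-while loop.
 *
 * $fetchPage is a hypothetical callable: given a cursor (null for the
 * first page) it returns ['items' => array, 'next' => cursor or null].
 */
function collectPages(callable $fetchPage, int $maxItems): array
{
    $items = [];
    $cursor = null;

    do {
        // Always fetch at least one page, then decide whether to continue.
        $page = $fetchPage($cursor);
        $items = array_merge($items, $page['items']);
        $cursor = $page['next'];
    } while ($cursor !== null && count($items) < $maxItems);

    // The last page may overshoot, so trim to the requested amount.
    return array_slice($items, 0, $maxItems);
}

// Stand-in for the API: 50 fake posts served 20 at a time.
$fakeApi = function ($cursor) {
    $all = range(1, 50);
    $offset = $cursor ?? 0;
    $next = $offset + 20;
    return [
        'items' => array_slice($all, $offset, 20),
        'next'  => $next < count($all) ? $next : null,
    ];
};

$posts = collectPages($fakeApi, 40);
echo count($posts) . " posts collected\n";
```

A do-while fits because the first request always has to happen before you know whether there is a next page.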

It’s essentially the same start as the code above because I want that same data. I learned a few other tricks though. One is that I don’t have to hand-build a CSV file. PHP has an fputcsv function. Opening the file like so writes to a file called vapedata.csv, and the a+ mode appends the new stuff to the end of the file rather than overwriting it.

Notice I’m splitting the CSV elements with ? instead of a comma or a pipe or something like that. I had to do that because people who write about vaping include all kinds of crazy punctuation and other garbage in their descriptions, and between commas, colons, and random pipes (this kind |, not this kind), the usual separators kept turning up inside the captions. You can see that character being used on the array_push line and again in the fputcsv line.

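    <?php
    $list = array();

    foreach ($result->data as $media) {
        $username = $media->user->username;
        $likes    = $media->likes->count;
        $comments = $media->comments->count;
        $link     = $media->link;
        // the caption may be missing on posts without one
        $caption  = isset($media->caption->text) ? $media->caption->text : '';
        $filter   = $media->filter;

        // join the fields with '?'; no hand-added quotes around the caption,
        // because fputcsv adds its own quoting where needed
        // (a caption that itself contains '?' will still split into extra columns)
        array_push($list, $username . '?' . $likes . '?' . $comments . '?' . $link . '?' . $caption . '?' . $filter);
    }

    // a+ appends to vapedata.csv instead of overwriting it
    $file = fopen("vapedata.csv", "a+");

    foreach ($list as $line) {
        // split each line back into fields on '?' and write it as one CSV row
        fputcsv($file, explode('?', $line));
    }

    fclose($file);
    ?>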
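
A possible alternative, sketched here rather than taken from the code above: since fputcsv accepts an array of fields and handles quoting itself, the fields can be handed over directly without joining and splitting on a separator. The rows are made-up stand-ins for the Instagram data, and `php://memory` is used only so the sketch runs without touching a real file.

```php
<?php
// Hypothetical rows standing in for the Instagram data; the captions
// deliberately contain commas, quotes, pipes, and question marks.
$rows = [
    ['user_one', 12, 3, 'https://example.com/p/1/', 'Is this good? Yes, it is | #vapes', 'Normal'],
    ['user_two', 5, 0, 'https://example.com/p/2/', 'He said "wow" #ecig', 'Clarendon'],
];

// php://memory keeps the sketch self-contained; swap in
// fopen('vapedata.csv', 'a+') to append to a real file.
$file = fopen('php://memory', 'w+');

foreach ($rows as $row) {
    // fputcsv() takes the fields as an array and quotes any field that
    // needs it. Delimiter, enclosure, and escape are spelled out explicitly.
    fputcsv($file, $row, ',', '"', '\\');
}

// Read the rows back to check that each one still has six fields.
rewind($file);
$readBack = [];
while (($fields = fgetcsv($file, 0, ',', '"', '\\')) !== false) {
    $readBack[] = $fields;
}
fclose($file);

echo count($readBack[0]) . " fields in the first row\n";
```

With this approach the punctuation inside a caption no longer matters, because nothing is ever split on a character that a caption might contain.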

Do It Every Hour

That code runs every time the page opens. My fairly lame way to do this is to call the page via cron every hour. I’m doing that like this: `env EDITOR=nano crontab -e` opens up the cron editor on the Mac via Terminal (although I’m using iTerm, thanks to Mark again). The entry `00 09-23 * * * curl http://192.168.33.10/data.php` should call my page on the nifty virtual server every hour from 9:00 AM to 11:00 PM.
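
One likely side effect of appending every hour is that a post still sitting in the latest 20 may get saved more than once. Here is a rough sketch of weeding those out after reading the rows back in. `dedupeByLink` is a hypothetical helper, the rows are made up, and the link being in column index 3 is an assumption based on the field order used in this post.

```php
<?php
/**
 * Keep only the first row seen for each post link.
 * $linkColumn = 3 assumes the column order used in this post:
 * username, likes, comments, link, caption, filter.
 */
function dedupeByLink(array $rows, int $linkColumn = 3): array
{
    $seen = [];
    $unique = [];
    foreach ($rows as $row) {
        $link = $row[$linkColumn];
        if (isset($seen[$link])) {
            continue; // this post was already saved by an earlier run
        }
        $seen[$link] = true;
        $unique[] = $row;
    }
    return $unique;
}

// Made-up rows: the third one repeats the first post with newer counts.
$rows = [
    ['user_one', 12, 3, 'https://example.com/p/1/', '#vapes', 'Normal'],
    ['user_two', 5, 0, 'https://example.com/p/2/', '#ecig', 'Normal'],
    ['user_one', 15, 4, 'https://example.com/p/1/', '#vapes', 'Normal'],
];

$unique = dedupeByLink($rows);
echo count($unique) . " unique posts\n";
```

This keeps the earliest copy of each post. If the most recent like and comment counts matter more, overwriting the stored row instead of skipping it would be the variation to try.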

A Bit of Regex

I wanted to get a count of the hashtags used per Instagram. There’s probably a way to do this on the PHP side but I knew how to do it quickly in a spreadsheet. The formula `=REGEXREPLACE(E2,"[^#]","")` takes the entire caption text and strips out everything except the # characters. Now I can just use `=LEN(J2)` to get the number of characters left in the resulting cell, which is the number of hashtags. It’s not perfect because someone could just decorate with octothorpes but it works pretty well and is so much better than counting by hand.
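
For comparison, here is a small sketch of the same count on the PHP side. The first line mirrors the spreadsheet formula; the second is a stricter variant, my own assumption about what counts as a hashtag, that only counts a # followed by at least one word character. The sample caption is made up.

```php
<?php
$caption = 'Chasing clouds #vapes #ecig ### so good';

// Same idea as the spreadsheet formula: strip everything that is not '#'
// and measure what is left. Counts every '#', decorative or not.
$hashChars = strlen(preg_replace('/[^#]/', '', $caption));

// Stricter variant: only count a '#' that is followed by word characters.
$hashtags = preg_match_all('/#\w+/u', $caption);

echo "{$hashChars} hash characters, {$hashtags} hashtags\n";
```

On this caption the two numbers differ because the decorative `###` adds to the first count but not the second.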

Are you talking in triples yet? And while my charisma may not be enough, I think my dashing good looks may actually be the small bit you need.

This is very, very cool. And the blog as lab notebook for learning code is really cool. I have loved the mix of photography and weird colored code snippets I know nothing about, but really believe are the future 🙂

Way beyond proud, dude. I gotta find some time to catch up with all these new code chops you are showing.

You might be able to do the counting of # with something like count_chars()

Thanks Alan.

I ended up using

    $hashcount = substr_count($caption, '#');
    $hashtrue = (boolval($hashcount) ? 'true' : 'false');

Which gives me the number of hashes and a true/false flag for whether there’s one or more.

Hey, it looks like, from your PHP snippet up there, that you’re able to pull info on the filter used for a post? I’m looking for a way to get at that, but I’m not seeing it in the API or the wrapper. Am I missing it somewhere?

You can get the filter, at least in the media query responses. You can see filter as one of the json elements in the sample query response.

Hey there! I am looking to hire someone to retrieve the following and follower counts of a username every 24 hours into a spreadsheet/Google Sheet. Would anyone know how to do this? Please contact me at brody@embeecreatives.com

https://github.com/raiym/instagram-php-scraper this php library allows you to scrape public info without auth

Hey! That’s awesome. Thanks!